val x = Array(Some(1), None, Some(3), None)   // Array[Option[Int]] = Array(Some(1), None, Some(3), None)

| Method | Description |
| --- | --- |
| map(f) | Return a new sequence by applying the function f to each element in the Array |
| updated(i, v) | A new sequence with the element at index i replaced with the new value v |
| ++ s | A new sequence that contains elements from the current sequence and the sequence s |
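As a minimal sketch, the methods described above can be tried on the x array of Option values. The object name ArrayTransformDemo and the replacement values are assumptions made for illustration. The flatten call is included because an array of Option values lends itself to it.

```scala
object ArrayTransformDemo {
  // The array from the text: a mix of Some and None values.
  val x: Array[Option[Int]] = Array(Some(1), None, Some(3), None)

  // map: apply a function to each element, producing a new Array.
  val doubled: Array[Option[Int]] = x.map(_.map(_ * 2))

  // updated: a copy with the element at index 1 replaced; x itself is untouched.
  val patched: Array[Option[Int]] = x.updated(1, Some(2))

  // ++: a new Array holding the elements of x followed by those of another Array.
  val combined: Array[Option[Int]] = x ++ Array[Option[Int]](Some(5))

  // flatten: drop the None values and unwrap the Some values.
  val present: Array[Int] = x.flatten

  def main(args: Array[String]): Unit = {
    println(doubled.mkString(", "))
    println(patched.mkString(", "))
    println(combined.mkString(", "))
    println(present.mkString(", "))
  }
}
```

Each result is assigned to a new value, and x keeps its original contents throughout.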

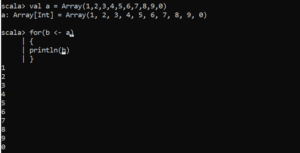

An Array has a fixed size, although its existing elements can be modified in place. Depending on your definition of “update,” there are also a variety of methods that return an updated copy of an Array, which you assign to a new variable:

| Method | Description |
| --- | --- |
| collect(pf) | A new collection by applying the partial function pf to all elements of the sequence, returning elements for which the function is defined |
| distinct | A new sequence with no duplicate elements |
| flatMap(f) | When working with sequences, it works like map followed by flatten |
| flatten | Transforms a sequence of sequences into a single sequence |

Filtering methods (how to “remove” elements from an Array)

An Array cannot be resized, so you don’t remove elements from it. Instead, you describe how to remove elements as you assign the results to a new collection. These methods let you “remove” elements during this process:

| Method | Description |
| --- | --- |
| distinct | Return a new sequence with no duplicate elements |
| drop(n) | Return all elements after the first n elements |
| dropWhile(p) | Drop the first sequence of elements that matches the predicate p |
| dropRight(n) | Return all elements except the last n elements |
| filter(p) | Return all elements that match the predicate p |
| filterNot(p) | Return all elements that do not match the predicate p |
| find(p) | Return the first element that matches the predicate p |
| head | Return the first element; can throw an exception if the Array is empty |
| intersect(s) | Return the intersection of the sequence and another sequence s |
| slice(f, u) | A sequence of elements from index f (from) to index u (until) |
| last | The last element; can throw an exception if the Array is empty |
| takeWhile(p) | The first subset of elements that matches the predicate p |

For example, the stack trace from calling last on an empty Array includes:

…: empty.last
  at ….last(Array.scala:197)

When prepending elements, the technically correct way to think about it is that a Scala method name that ends with the : character is right-associative, meaning that the method comes from the variable on the right side of the expression. Therefore, with operators such as +: and ++:, the method comes from the Array that’s on the right of the method name.

This article will show you how to print an array in various ways using Scala.
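The following is a minimal sketch of several filtering methods and of the right-associative +: operator, with the results printed through mkString. The object name ArrayFilterDemo and the sample values are assumptions made for illustration.

```scala
object ArrayFilterDemo {
  // Sample data; the values are illustrative only.
  val nums: Array[Int] = Array(1, 2, 2, 3, 4, 5)

  // Each call returns a new Array (or Option); nums itself is left unchanged.
  val evens: Array[Int]     = nums.filter(_ % 2 == 0)     // elements matching the predicate
  val odds: Array[Int]      = nums.filterNot(_ % 2 == 0)  // elements not matching it
  val unique: Array[Int]    = nums.distinct               // duplicates removed
  val afterTwo: Array[Int]  = nums.drop(2)                // everything after the first 2
  val small: Array[Int]     = nums.takeWhile(_ < 3)       // leading run matching the predicate
  val middle: Array[Int]    = nums.slice(1, 4)            // indices 1 until 4
  val firstBig: Option[Int] = nums.find(_ > 3)            // first match, if any

  // +: ends with a colon, so it is right-associative: a method on the Array to its right.
  val prepended: Array[Int] = 0 +: nums
  // :+ is an ordinary left-associative method; the colon still faces the original Array.
  val appended: Array[Int] = nums :+ 6

  def main(args: Array[String]): Unit = {
    // mkString prints the elements; println(nums) alone would print the Java array's toString.
    println(evens.mkString(", "))
    println(unique.mkString("[", ", ", "]"))
    println(prepended.mkString(" "))
    println(appended.mkString(" "))
  }
}
```

Using mkString (or foreach(println)) avoids relying on the default toString of the underlying Java array, which does not show the elements.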
An array is a data structure that stores elements of the same type. In Scala, an array is equivalent to a native Java array. When we try to print the contents of an array in Scala, we get the output of the Array.toString method, which is a type tag followed by the object's hash code rather than the elements themselves.

This page contains dozens of examples that show how to use the methods on the Scala Array class.

The Scala Array class is a mutable, indexed, sequential collection. Unlike the Scala ArrayBuffer class, the Array class is only mutable in the sense that its existing elements can be modified; it can’t be resized like ArrayBuffer. You can think of the Scala Array as being a wrapper around the Java array primitive type. I rarely use Array; it’s okay to use it when you write OOP code, but if you’re writing multi-threaded or FP code, you won’t want to use it because its elements can be mutated. You should generally prefer the Scala Vector and ArrayBuffer classes rather than using Array.

In many of the examples on this page, specifically when I demonstrate the methods available on the Array class, like map, filter, etc., you need to assign the result of the operation shown to a new variable.

Note that when you prepend or append with a method whose name contains a :, the : character is always next to the old (original) sequence. I use that as a way to remember these methods.

In both examples above, we start a SparkSession of the same name and use the sparkContext.parallelize() method of a SparkContext object to create an RDD. We use a sequence in Scala and a list in Python to hold the data. You can see the elements of the RDD thanks to the foreach() and collect() methods.

Convert RDD To DataFrame Using toDF()

DataFrame draws inspiration from the Python package pandas. In Spark, it is built on top of RDDs, translating SQL and DSL expressions into RDD operations. toDF() is a simple method in both the Scala and Python APIs that you can use to convert RDDs to DataFrames.
appName("Convert Spark RDD to DataFrame and Dataset") \
